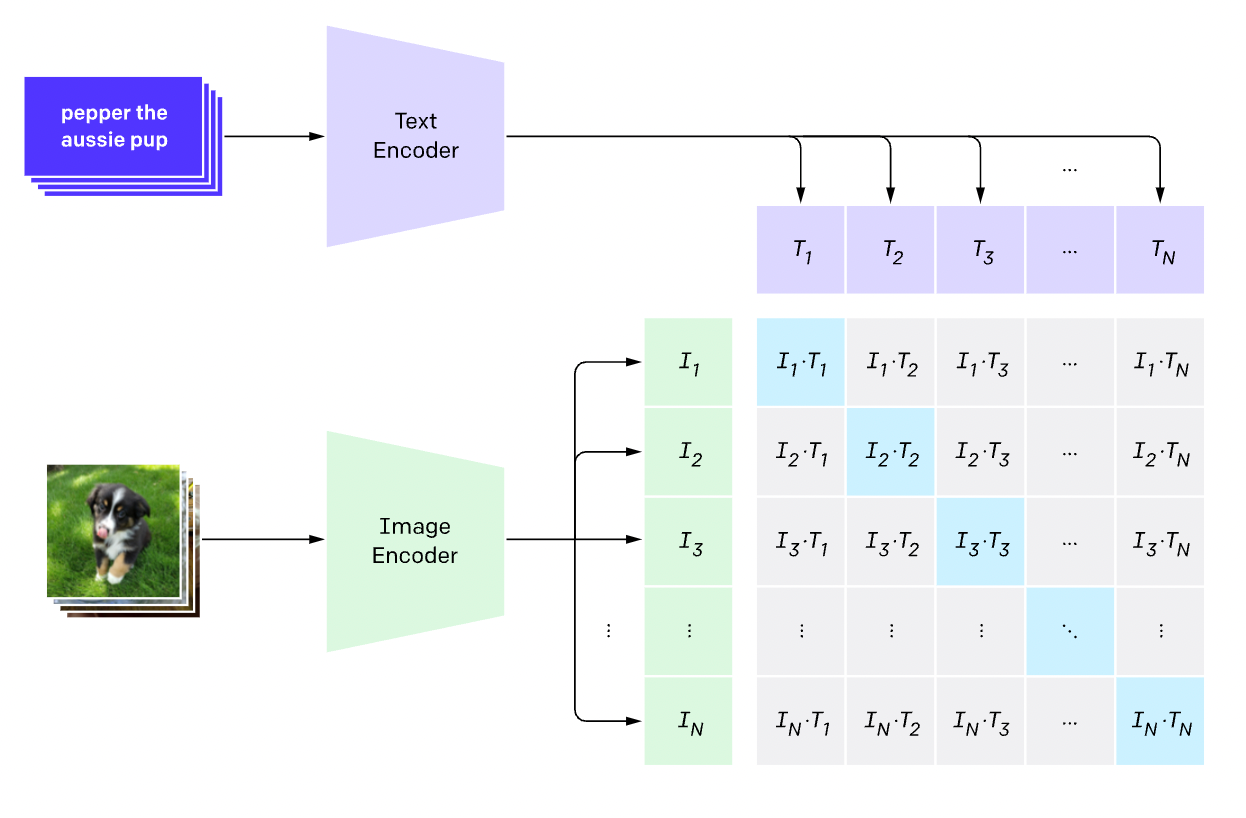

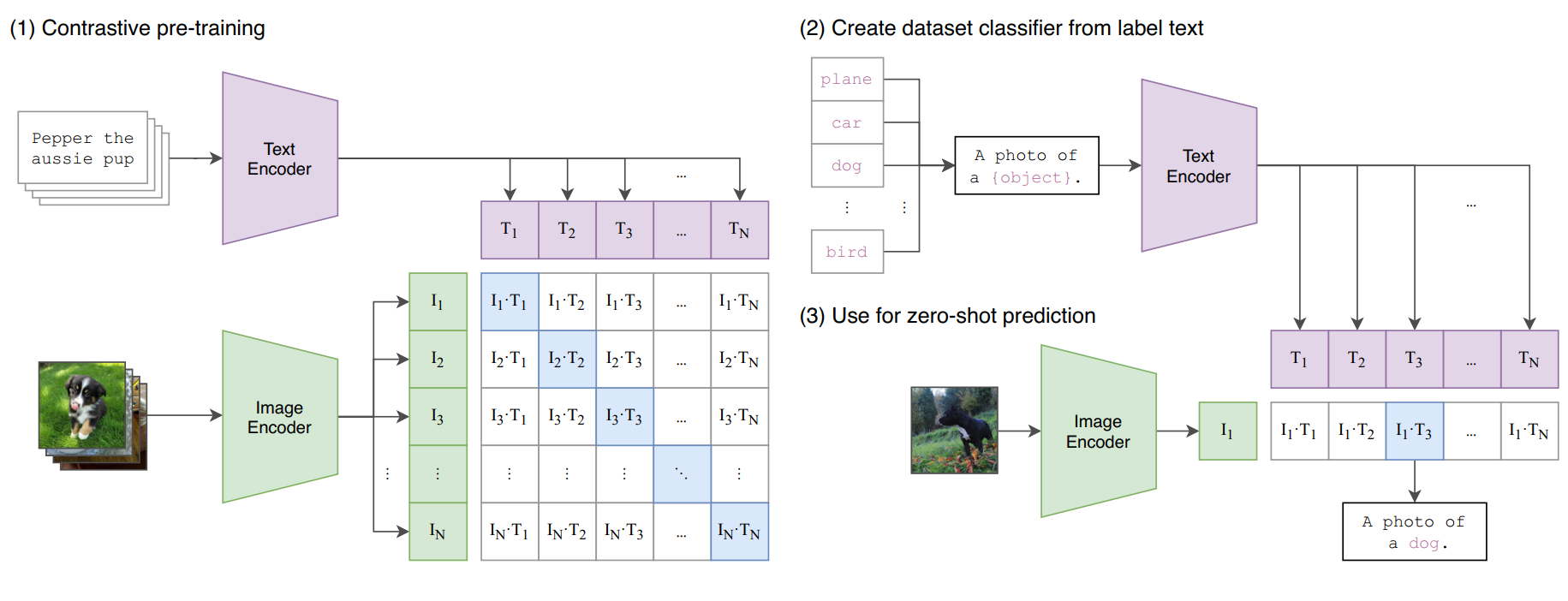

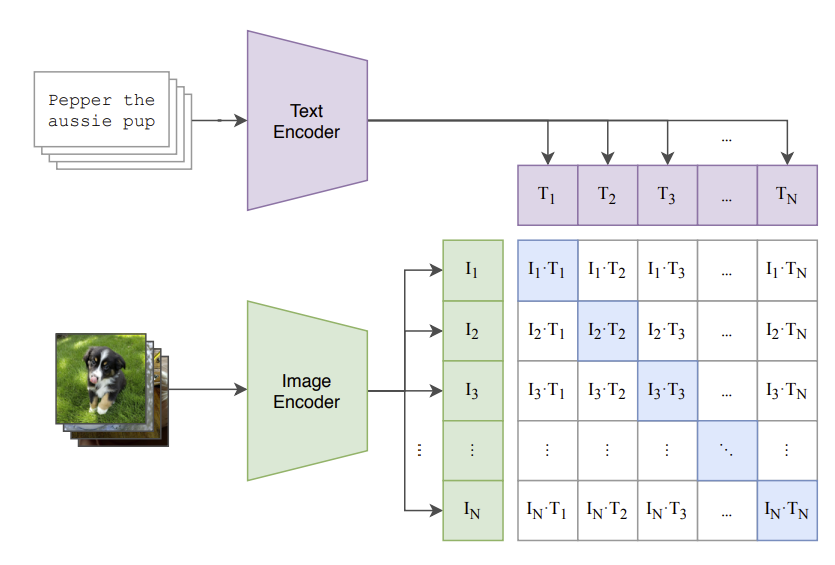

GitHub - openai/CLIP: CLIP (Contrastive Language-Image Pretraining), Predict the most relevant text snippet given an image

Aman Arora on X: "Excited to present part-2 of Annotated CLIP (the only 2 resources that you will need to understand CLIP completely with PyTorch code implementation). https://t.co/L0RHsvixcd As part of this

![\[P\] train-CLIP: A PyTorch Lightning Framework Dedicated to the Training and Reproduction of Clip : r/MachineLearning](https://external-preview.redd.it/IQi6Y8-dVcANDKE148AmnqA7rVrILTQia0DO4wJVsls.jpg?auto=webp&s=7651907ebaa5fd5f41e2d09cdb4906baf31c971a)

[P] train-CLIP: A PyTorch Lightning Framework Dedicated to the Training and Reproduction of Clip : r/MachineLearning

GitHub - weiyx16/CLIP-pytorch: A non-JIT version implementation / replication of CLIP of OpenAI in pytorch

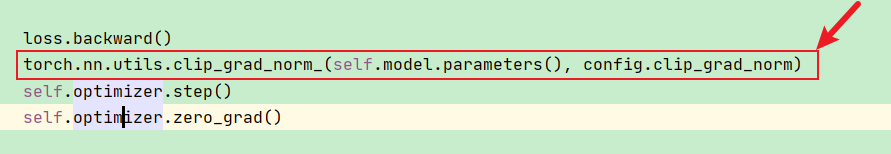

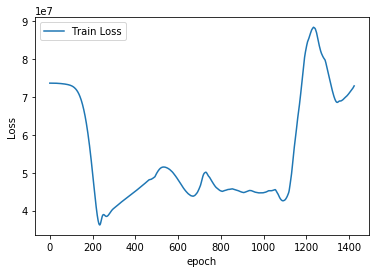

The Difference Between PyTorch clip_grad_value_() and clip_grad_norm_() Functions | James D. McCaffrey

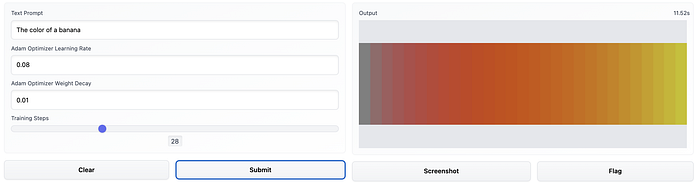

Explaining the code of the popular text-to-image algorithm (VQGAN+CLIP in PyTorch) | by Alexa Steinbrück | Medium

Contrastive Language–Image Pre-training (CLIP)-Connecting Text to Image | by Sthanikam Santhosh | Medium